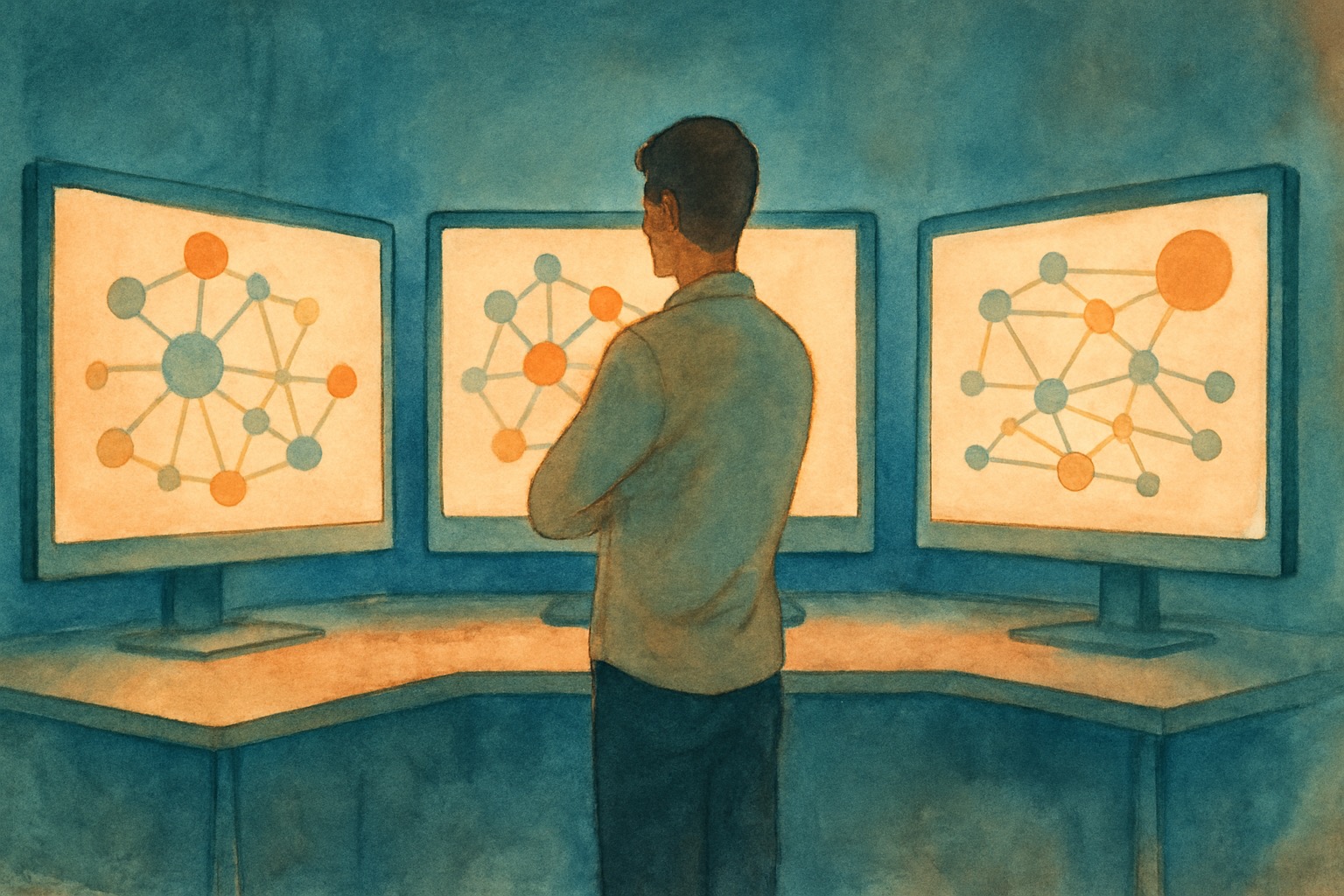

Your agent needs to check a customer's order status, process a refund, and search your product catalog for a replacement. The question that trips up most developers: is that one protocol or two?

If you're connecting the agent to your order database and refund API, that's MCP. If you're handing the product search to a specialist agent built by another team, that's A2A. The confusion is understandable. Both protocols use JSON-RPC. Both were donated to the Linux Foundation. Both have "agent" in every other sentence of their documentation. But they solve different problems at different layers of the stack.

Gartner reported a 1,445% surge in multi-agent system inquiries from Q1 2024 to Q2 2025. The firm also predicts that by the end of 2026, 40% of enterprise applications will embed task-specific AI agents. The teams building those systems need to understand which protocol handles which connection, or they'll spend months building custom integration code that these standards already solve.

This article draws a clear line between the two protocols, shows how they work at the wire level, and gives you a decision framework for your own architecture. We run MCP in production across voice, chat, and messaging, so the examples come from real systems, not spec documents.

Table of Contents

- The One-Line Distinction

- MCP: The Tool Protocol

- A2A: The Agent Protocol

- The Protocol Stack in Action

- Side-by-Side Comparison

- The Governance Convergence

- Decision Framework: Which Do You Need?

- MCP in Practice with Chanl

- What to Adopt Now

The One-Line Distinction

MCP connects agents to tools. A2A connects agents to agents. Everything else is implementation detail.

Think of it through a hiring analogy. MCP is like giving an employee access to the company's software. They can look up customer records, run reports, process transactions. The software doesn't think. It executes commands and returns results.

A2A is like that employee calling a colleague in another department. "Hey, I need a product recommendation for a customer with a $1,000 budget." The colleague has their own tools, their own context, their own reasoning. They might ask clarifying questions. They might take three minutes or three hours. They return a result, not a data row.

The vertical connection (MCP) is deterministic. You send a request, you get data back. The horizontal connection (A2A) involves reasoning on both sides. The receiving agent decides how to fulfill the request, which tools to use, and how to structure the response.

MCP: The Tool Protocol

MCP is a client-server protocol that lets any AI application discover and call external tools through a standard interface. Anthropic released it in November 2024. By March 2026, the ecosystem counted 10,000+ public servers, 97 million monthly SDK downloads, and native client support in Claude, ChatGPT, Cursor, Gemini, Microsoft Copilot, and VS Code.

How MCP Works

The protocol is built on JSON-RPC 2.0 with a lifecycle that starts with capability negotiation. A client connects to a server, they exchange what each side supports, and then the client can discover and call tools.
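As a rough sketch of that lifecycle on the wire, the messages below use the JSON-RPC 2.0 method names MCP defines (initialize, notifications/initialized, tools/list, tools/call). The helper name, the client name, the tool name, and the protocol version string are illustrative assumptions, and the sketch only builds messages rather than sending them.

```typescript
// Minimal JSON-RPC 2.0 message shapes for an MCP session (sketch only).
type JsonRpcRequest = {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params?: Record<string, unknown>;
};

// Notifications are requests without an id; no response is expected.
type JsonRpcNotification = Omit<JsonRpcRequest, "id">;

// Hypothetical helper: hands out increasing request ids.
function createRequestFactory() {
  let nextId = 1;
  return (method: string, params?: Record<string, unknown>): JsonRpcRequest => ({
    jsonrpc: "2.0",
    id: nextId++,
    method,
    ...(params ? { params } : {}),
  });
}

const request = createRequestFactory();

// 1. Capability negotiation: the client states what it supports.
const initialize = request("initialize", {
  protocolVersion: "2025-06-18", // assumed; use the version your SDK targets
  capabilities: {},
  clientInfo: { name: "example-client", version: "0.1.0" },
});

// 2. After the server replies, the client confirms with a notification.
const initialized: JsonRpcNotification = {
  jsonrpc: "2.0",
  method: "notifications/initialized",
};

// 3. Discovery, then 4. invocation of a tool by name.
const listTools = request("tools/list");
const callTool = request("tools/call", {
  name: "lookup_order", // example tool name
  arguments: { orderId: "ORD-7742" }, // example argument
});

const sessionMessages = [initialize, initialized, listTools, callTool];
console.log(sessionMessages.map((m) => m.method).join(" -> "));
```

In practice an SDK builds and sends these messages for you; the value of seeing them is knowing what to look for when you inspect traffic or logs.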

MCP servers expose three primitive types. Tools are executable actions (query a database, send an email, process a payment). Resources are read-only data the agent can pull in for context (a document, a configuration file). Prompts are reusable templates that encode best practices for a specific workflow.

Here's a minimal MCP server that exposes a customer lookup tool. The description field is what the LLM reads to decide when to call it:

```typescript
import { McpServer } from "@modelcontextprotocol/sdk/server/mcp.js";
import { z } from "zod";

const server = new McpServer({
  name: "customer-service",
  version: "1.0.0",
});

// The LLM reads this description to decide when to use the tool
server.tool(
  "lookup_order",
  "Find order details by order ID including status, items, and shipping info",
  // The SDK expects a Zod shape for parameters, not a raw JSON Schema object
  { orderId: z.string().describe("The order ID to look up") },
  async ({ orderId }) => {
    // `db` stands in for your own data-access layer
    const order = await db.orders.findById(orderId);
    return {
      content: [{ type: "text", text: JSON.stringify(order) }],
    };
  }
);
```

That tool description, along with the parameter schema, gets serialized into the LLM's context window. The model reads it, decides the tool is relevant to the user's request, and generates a tools/call JSON-RPC message. The server executes the handler and returns the result.

For a full walkthrough of building MCP servers in both TypeScript and Python, see our hands-on tutorial.

The Context Window Problem

Every tool you register eats tokens. The tool name, description, parameter schemas, and response format all get serialized into the context window before the user says a single word.

At Ask 2026 on March 11, Perplexity CTO Denis Yarats announced the company was moving away from MCP internally. His team measured three MCP servers (GitHub, Slack, Sentry) loading approximately 40 tool schemas that consumed 143,000 of 200,000 available context tokens. That's 72% of the model's working memory gone before processing any user query.

Gil Feig, CTO of Merge, estimates that tool metadata overhead accounts for 40-50% of available context in typical deployments. The numbers vary based on how many tools you load and how verbose your schemas are, but the pattern is consistent: MCP's runtime discovery model front-loads context cost.
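The arithmetic behind those figures is simple enough to sketch. The function names below are hypothetical, and the four-characters-per-token ratio is only a common rule of thumb, not how any particular tokenizer counts.

```typescript
// Share of the context window consumed by tool metadata, as a percentage.
function contextOverheadPercent(schemaTokens: number, contextWindow: number): number {
  if (contextWindow <= 0) throw new RangeError("contextWindow must be positive");
  return (schemaTokens * 100) / contextWindow;
}

// Very rough token estimate for a serialized tool schema.
// Assumption: ~4 characters per token; real tokenizers will differ.
function estimateSchemaTokens(schema: unknown, charsPerToken = 4): number {
  return Math.ceil(JSON.stringify(schema).length / charsPerToken);
}

// The figures quoted above: 143,000 schema tokens in a 200,000-token window.
const reported = contextOverheadPercent(143000, 200000);
console.log(`${reported.toFixed(1)}% of the window used by tool schemas`);

// Estimating one of your own tool definitions before registering it.
const lookupOrderSchema = {
  name: "lookup_order",
  description: "Find order details by order ID including status, items, and shipping info",
  inputSchema: {
    type: "object",
    properties: {
      orderId: { type: "string", description: "The order ID to look up" },
    },
    required: ["orderId"],
  },
};
console.log(`~${estimateSchemaTokens(lookupOrderSchema)} tokens for one tool`);
```

Running an estimate like this over every schema a server exposes gives a quick sense of how much context a connection costs before any conversation starts.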

The MCP maintainers addressed this directly in the 2026 roadmap published March 9. The fix is MCP Server Cards, a .well-known metadata format that lets clients discover what a server offers without connecting to it. Instead of loading every schema upfront, clients will browse capability metadata and load only what they need. It's the same evolution REST APIs went through: from "return everything" to pagination to sparse fieldsets to GraphQL.

Transport and Scaling

MCP supports two transport layers. stdio pipes messages through stdin/stdout for local tools (an IDE spawning a server as a child process). Streamable HTTP handles remote deployments with bidirectional communication and session management via the Mcp-Session-Id header.

The scaling challenge is that MCP sessions are stateful by default. A server maintains the negotiated capabilities, resource subscriptions, and initialization state per session. That works fine on a single process but breaks behind load balancers. The 2026 roadmap prioritizes stateless session handling so servers can scale horizontally without sticky sessions. For deeper coverage of transport patterns and production MCP architecture, see our advanced MCP patterns guide.
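To see why sticky sessions come up, here is a minimal sketch with no networking at all. ServerInstance, SessionStore, and MapSessionStore are hypothetical names, not part of any MCP SDK; the point is only that state negotiated on one instance is invisible to another unless the store is shared.

```typescript
type SessionState = { protocolVersion: string; capabilities: string[] };

// Hypothetical store interface; in production this might be a shared cache.
interface SessionStore {
  get(sessionId: string): SessionState | undefined;
  set(sessionId: string, state: SessionState): void;
}

class MapSessionStore implements SessionStore {
  private sessions = new Map<string, SessionState>();
  get(sessionId: string): SessionState | undefined {
    return this.sessions.get(sessionId);
  }
  set(sessionId: string, state: SessionState): void {
    this.sessions.set(sessionId, state);
  }
}

// Stands in for one MCP server process behind a load balancer.
class ServerInstance {
  private store: SessionStore;
  constructor(store: SessionStore) {
    this.store = store;
  }

  // Called once per session, after capability negotiation.
  initialize(sessionId: string, state: SessionState): void {
    this.store.set(sessionId, state);
  }

  // Any later request must present a session id this instance can resolve.
  handleRequest(sessionId: string): "ok" | "unknown-session" {
    return this.store.get(sessionId) ? "ok" : "unknown-session";
  }
}

const negotiated: SessionState = { protocolVersion: "2025-06-18", capabilities: ["tools"] };

// Per-process state: the second instance has never seen the session.
const instanceA = new ServerInstance(new MapSessionStore());
const instanceB = new ServerInstance(new MapSessionStore());
instanceA.initialize("session-1", negotiated);
console.log(instanceB.handleRequest("session-1")); // "unknown-session"

// Shared state: either instance can serve the request.
const sharedStore = new MapSessionStore();
const instanceC = new ServerInstance(sharedStore);
const instanceD = new ServerInstance(sharedStore);
instanceC.initialize("session-1", negotiated);
console.log(instanceD.handleRequest("session-1")); // "ok"
```

A shared store is one way around sticky sessions; the stateless handling the roadmap describes would remove the need for the lookup altogether.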

A2A: The Agent Protocol

A2A is a peer-to-peer protocol that lets independent AI agents discover each other, delegate tasks, and share results. Google launched it in April 2025 and donated it to the Linux Foundation in June 2025, where it now has its own Technical Steering Committee with representation from Google, Microsoft, AWS, IBM, Cisco, Salesforce, ServiceNow, and SAP. IBM's competing Agent Communication Protocol (ACP) merged into it by August 2025.

How A2A Works

Where MCP's fundamental unit is a tool call (execute this function, return the result), A2A's fundamental unit is a task (achieve this goal, figure out how). A task has a lifecycle: it moves through states such as submitted, working, input-required, completed, canceled, and failed. The receiving agent decides how to accomplish it.

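A2A communication also uses JSON-RPC 2.0 over HTTP, but the message patterns are different. Instead of tools/call and tools/list, you're working with tasks/send, tasks/get, and tasks/cancel. (Later revisions of the spec rename tasks/send to message/send, so check the version your SDK targets.)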

The key difference from a tool call: the receiving agent can push intermediate updates, request additional input, and take an unpredictable amount of time. A tool call is synchronous (call, wait, get result). A task is asynchronous (send, get updates, eventually get result).
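One way to picture the task lifecycle is as a small state machine. The sketch below uses state names from the A2A specification, but the transition table is a simplifying assumption for illustration, not a normative part of the protocol, and the function names are hypothetical.

```typescript
// A subset of A2A task states, for illustration.
type TaskState =
  | "submitted"
  | "working"
  | "input-required"
  | "completed"
  | "canceled"
  | "failed";

// Assumed transition table: a simplification, not taken from the spec.
const allowedTransitions: Record<TaskState, TaskState[]> = {
  submitted: ["working", "canceled", "failed"],
  working: ["input-required", "completed", "canceled", "failed"],
  "input-required": ["working", "canceled", "failed"],
  completed: [],
  canceled: [],
  failed: [],
};

// Terminal states have no outgoing transitions.
function isTerminal(state: TaskState): boolean {
  return allowedTransitions[state].length === 0;
}

// Returns the new state, or throws if the move is not allowed.
function transition(from: TaskState, to: TaskState): TaskState {
  if (allowedTransitions[from].indexOf(to) === -1) {
    throw new Error(`Invalid task transition: ${from} -> ${to}`);
  }
  return to;
}

// A task that pauses to ask the client a clarifying question, then finishes.
const path: TaskState[] = ["working", "input-required", "working", "completed"];
let current: TaskState = "submitted";
for (const next of path) {
  current = transition(current, next);
  console.log(current, isTerminal(current) ? "(terminal)" : "");
}
```

A tool call has no equivalent of the input-required detour: the loop through working and back is what makes a task a conversation rather than a function call.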

Agent Cards: Discovery Without Configuration

A2A's most practical feature is the Agent Card, a JSON document published at /.well-known/agent.json. It describes what the agent can do, what formats it accepts, how to authenticate, and what skills it offers.

Any agent can discover another by fetching its Agent Card. No prior configuration, no shared registry, no API keys exchanged out of band. Here's what a product specialist agent's card looks like:

```json
{
  "name": "Product Specialist",
  "description": "Search catalogs, compare specs, recommend by budget",
  "url": "https://products.example.com/a2a",
  "version": "1.0.0",
  "defaultInputModes": ["text"],
  "defaultOutputModes": ["text"],
  "capabilities": {
    "streaming": true,
    "pushNotifications": true
  },
  "skills": [
    {
      "id": "product-search",
      "name": "Product Search",
      "description": "Find products matching criteria within a budget",
      "tags": ["e-commerce", "search", "recommendations"]
    }
  ],
  "authentication": {
    "schemes": ["oauth2"]
  }
}
```

This solves a real problem. In today's multi-agent architectures, connecting agent A to agent B requires custom integration code for every pair. Agent Cards make agent discovery as simple as DNS made hostname resolution: publish a well-known document, and anyone can find you.
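On the client side, discovery could look roughly like the sketch below. It assumes the /.well-known/agent.json path and a subset of the card fields described above; agentCardUrl, isAgentCard, findSkillsByTag, and discoverAgent are hypothetical helpers, and the fetcher is injected so the sketch makes no claims about any particular HTTP client or A2A SDK.

```typescript
// Minimal Agent Card shape, limited to fields this sketch uses.
interface AgentSkill {
  id: string;
  name: string;
  description: string;
  tags: string[];
}
interface AgentCard {
  name: string;
  description: string;
  url: string;
  version: string;
  skills: AgentSkill[];
}

// The card lives at a well-known path on the agent's origin.
function agentCardUrl(agentBaseUrl: string): string {
  return new URL("/.well-known/agent.json", agentBaseUrl).toString();
}

// Runtime check for a remote document; verifies only the fields used below.
function isAgentCard(value: unknown): value is AgentCard {
  if (typeof value !== "object" || value === null) return false;
  const { name, url, skills } = value as Record<string, unknown>;
  if (typeof name !== "string" || typeof url !== "string" || !Array.isArray(skills)) {
    return false;
  }
  return skills.every(
    (skill) => typeof skill?.id === "string" && Array.isArray(skill?.tags),
  );
}

// Pick the skills that advertise a given tag.
function findSkillsByTag(card: AgentCard, tag: string): AgentSkill[] {
  return card.skills.filter((skill) => skill.tags.indexOf(tag) !== -1);
}

// Fetch and validate a card; fetchJson is supplied by the caller.
async function discoverAgent(
  agentBaseUrl: string,
  fetchJson: (url: string) => Promise<unknown>,
): Promise<AgentCard> {
  const candidate = await fetchJson(agentCardUrl(agentBaseUrl));
  if (!isAgentCard(candidate)) throw new Error("Not a valid Agent Card");
  return candidate;
}

// The card from the example above, reduced to the typed fields.
const productSpecialistCard: AgentCard = {
  name: "Product Specialist",
  description: "Search catalogs, compare specs, recommend by budget",
  url: "https://products.example.com/a2a",
  version: "1.0.0",
  skills: [
    {
      id: "product-search",
      name: "Product Search",
      description: "Find products matching criteria within a budget",
      tags: ["e-commerce", "search", "recommendations"],
    },
  ],
};

console.log(agentCardUrl(productSpecialistCard.url));
console.log(findSkillsByTag(productSpecialistCard, "search").map((s) => s.id));
```

Validating the card before trusting it matters more here than with local configuration, because the document comes from a party you may never have integrated with before.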

Tasks, Messages, and Artifacts

An A2A interaction has three core data structures. A Task is the unit of work with a unique ID and lifecycle state. Messages carry the conversation between agents (user messages from the client, agent messages from the server). Artifacts are the outputs: documents, structured data, images, or any content the server agent produces.

Here's the wire format for sending a task:

```json
{
  "jsonrpc": "2.0",
  "id": 1,
  "method": "tasks/send",
  "params": {
    "id": "task-abc-123",
    "message": {
      "role": "user",
      "parts": [
        {
          "type": "text",
          "text": "Find a laptop under $1000 with 16GB RAM and at least 512GB SSD"
        }
      ]
    }
  }
}
```

The top-level id is the JSON-RPC request ID (without it, the message would be a notification and get no response); params.id identifies the task. The server agent processes the request using whatever tools and reasoning it needs. It might use MCP internally to query a product database, call a pricing API, and check inventory levels. The client agent doesn't see any of that. It just receives task status updates and, eventually, artifacts containing the results.

That opacity is by design. A2A treats agents as opaque services. You don't need to know what framework, model, or tools the other agent uses. You send a task, you get a result. This is what makes cross-vendor, cross-framework agent collaboration possible.
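From the client's point of view, the exchange reduces to building a request and reading artifacts out of the result. The sketch below follows the tasks/send format shown above; the result shape, the type names, and both helper functions are assumptions for illustration rather than a complete rendering of the A2A schema.

```typescript
type TextPart = { type: "text"; text: string };
type Message = { role: "user" | "agent"; parts: TextPart[] };
type Artifact = { name?: string; parts: TextPart[] };
// Assumed, simplified task result: id, status, and optional artifacts.
type Task = { id: string; status: { state: string }; artifacts?: Artifact[] };

// Build the JSON-RPC request that hands a task to another agent.
function buildSendTaskRequest(requestId: number, taskId: string, text: string) {
  const message: Message = { role: "user", parts: [{ type: "text", text }] };
  return {
    jsonrpc: "2.0" as const,
    id: requestId, // JSON-RPC request id
    method: "tasks/send" as const,
    params: { id: taskId, message }, // params.id is the task id
  };
}

// Flatten every text part of every artifact into a list of strings.
function collectArtifactText(task: Task): string[] {
  const texts: string[] = [];
  for (const artifact of task.artifacts ?? []) {
    for (const part of artifact.parts) texts.push(part.text);
  }
  return texts;
}

const sendRequest = buildSendTaskRequest(
  1,
  "task-abc-123",
  "Find a laptop under $1000 with 16GB RAM and at least 512GB SSD",
);

// Made-up result standing in for what a specialist agent might return.
const exampleResult: Task = {
  id: "task-abc-123",
  status: { state: "completed" },
  artifacts: [
    {
      name: "recommendations",
      parts: [{ type: "text", text: "Example Laptop 14: 16GB RAM, 512GB SSD" }],
    },
  ],
};

console.log(JSON.stringify(sendRequest, null, 2));
console.log(collectArtifactText(exampleResult));
```

Nothing in the client code refers to the specialist's tools or model, which is the opacity the protocol is designed around.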

The Protocol Stack in Action

Most production agent systems will use MCP and A2A together. The orchestrator calls tools directly via MCP for deterministic operations, then delegates reasoning-heavy tasks to specialist agents via A2A. Walk through a concrete example: a customer service system handling "Return my laptop and find me a replacement under $1,000."

The orchestrator agent handles the return itself using MCP. It calls the order management tool to verify the purchase, then calls the refund tool to process the return. These are deterministic operations: look up data, execute a transaction, return a result.

For the replacement search, the orchestrator uses A2A to delegate to a product specialist. That specialist agent runs on a different framework, was built by a different team, and has its own MCP connections to product catalogs and pricing APIs. The orchestrator sends a task ("find a laptop under $1,000 with similar specs") and gets back artifacts (product recommendations with comparisons).

The customer sees one conversation. Behind it, two protocols handle two distinct types of connections.
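The flow above can be sketched as a single orchestrator function with the two protocols behind separate interfaces. ToolClient and AgentClient are hypothetical abstractions, not real MCP or A2A SDK types, process_refund is an assumed tool name, and the stub implementations exist only so the sketch runs on its own.

```typescript
// Hypothetical client interfaces; real MCP and A2A SDKs expose richer APIs.
interface ToolClient {
  callTool(name: string, args: Record<string, unknown>): Promise<string>;
}
interface AgentClient {
  sendTask(text: string): Promise<string[]>;
}

async function handleReturnAndReplace(
  orderId: string,
  budget: number,
  tools: ToolClient,
  specialist: AgentClient,
) {
  // Deterministic steps go through MCP: look up data, execute a transaction.
  const order = await tools.callTool("lookup_order", { orderId });
  const refund = await tools.callTool("process_refund", { orderId });

  // The reasoning-heavy step is delegated over A2A as a task.
  const recommendations = await specialist.sendTask(
    `Find a laptop under $${budget} with similar specs to order ${orderId}`,
  );

  return { order, refund, recommendations };
}

// Stubs standing in for an MCP connection and a remote specialist agent.
const stubTools: ToolClient = {
  callTool: async (name, args) => `${name} ok for ${String(args.orderId)}`,
};
const stubSpecialist: AgentClient = {
  sendTask: async (text) => [`Recommendation for: ${text}`],
};

handleReturnAndReplace("ORD-7742", 1000, stubTools, stubSpecialist).then((result) => {
  console.log(result);
});
```

Keeping the two clients behind separate interfaces preserves the distinction between calling a tool and delegating a task, even though both appear in the same function.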

For more on orchestrating multiple agents in production, see our guide on multi-agent orchestration patterns.

Side-by-Side Comparison

MCP is synchronous, client-server, and deterministic. A2A is asynchronous, peer-to-peer, and involves autonomous reasoning on both sides. The table below compares them across every dimension that matters for architecture decisions.

| Dimension | MCP | A2A |
|---|---|---|
| Purpose | Connect agent to tools | Connect agent to agent |
| Relationship | Client-server | Peer-to-peer |
| Unit of work | Tool call (execute, return) | Task (submit, track, complete) |
| Execution | Synchronous (call/response) | Asynchronous (lifecycle states) |
| Discovery | tools/list after connection | Agent Card at /.well-known/agent.json |
| Intelligence | Server is deterministic | Server agent reasons autonomously |
| Wire protocol | JSON-RPC 2.0 | JSON-RPC 2.0 |
| Transport | stdio, Streamable HTTP | HTTP(S), gRPC (v0.3+) |
| Created by | Anthropic (Nov 2024) | Google (Apr 2025) |
| Governance | AAIF / Linux Foundation | Linux Foundation |
| Adoption | 10,000+ servers, 97M monthly downloads | 100+ enterprise supporters, growing |
| Spec maturity | Production-stable, OAuth 2.1, Tasks primitive | v0.3, gRPC support, security cards |

The simplest test: does the thing you're connecting to think? If no (it's an API, database, or service), use MCP. If yes (it's another agent with its own reasoning), use A2A.

The Governance Convergence

Both MCP and A2A are now governed under the Linux Foundation, backed by every major AI company. There is no standards war. The convergence happened fast.

Anthropic open-sourced MCP in November 2024. Within a year, OpenAI, Google, Microsoft, and Amazon all shipped MCP support. In December 2025, Anthropic, OpenAI, and Block co-founded the Agentic AI Foundation (AAIF) under the Linux Foundation, with Google, Microsoft, AWS, Bloomberg, and Cloudflare joining as supporters. MCP was the founding project.

On the A2A side, Google launched the protocol in April 2025, one month after IBM released its competing Agent Communication Protocol (ACP). Google donated A2A to the Linux Foundation in June, and by August ACP had merged into A2A under the same foundation.

The result: no vendor war, no competing standards. MCP handles agent-to-tool connections. A2A handles agent-to-agent coordination. They complement each other by design.

Decision Framework: Which Do You Need?

Start with a simple question: what are you connecting?

You need MCP alone when your agent calls external tools, APIs, or databases directly. A single agent that looks up orders, sends emails, and processes refunds needs MCP connections to those services. Most agent projects start here, and many never need anything else.

You need A2A alone when your agents need to coordinate with agents built by other teams or vendors. If your compliance team publishes an agent that checks regulatory requirements, and your sales team's agent needs to consult it before generating proposals, that's A2A. The agents don't share code, frameworks, or tool access. They communicate through tasks.

You need both when specialist agents each need their own tool access. This is the most common production architecture for complex systems: an orchestrator uses A2A to delegate to specialists, and each specialist uses MCP to call its own tools.

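The decision tree reduces to two questions. Does your agent call tools, APIs, or databases directly? If yes, you need MCP. Does it need to coordinate with independently built agents? If yes, you need A2A. Yes to both means you need both.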

A practical heuristic: if you have fewer than five agents and they all share a codebase, you probably don't need A2A yet. Direct function calls work fine. A2A's value appears when agents are independently deployed, independently versioned, or built by different teams. The protocol adds overhead that's only justified when you genuinely need cross-boundary interoperability.
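The framework is small enough to write down as a function. This is only a sketch of the reasoning in this section: SystemProfile and protocolsNeeded are hypothetical names, and the boolean inputs compress judgment calls that a real architecture review would weigh more carefully.

```typescript
type Protocol = "MCP" | "A2A";

// Hypothetical description of a system, mirroring the questions above.
interface SystemProfile {
  callsToolsDirectly: boolean; // APIs, databases, or other non-reasoning services
  agentsIndependentlyDeployed: boolean;
  agentsIndependentlyVersioned: boolean;
  agentsBuiltByDifferentTeams: boolean;
}

function protocolsNeeded(profile: SystemProfile): Protocol[] {
  const needed: Protocol[] = [];

  // Anything that executes without reasoning is a tool: use MCP.
  if (profile.callsToolsDirectly) needed.push("MCP");

  // A2A earns its overhead only when agents cross a deployment,
  // versioning, or team boundary.
  const crossesBoundary =
    profile.agentsIndependentlyDeployed ||
    profile.agentsIndependentlyVersioned ||
    profile.agentsBuiltByDifferentTeams;
  if (crossesBoundary) needed.push("A2A");

  return needed;
}

// A single agent that looks up orders and processes refunds.
console.log(
  protocolsNeeded({
    callsToolsDirectly: true,
    agentsIndependentlyDeployed: false,
    agentsIndependentlyVersioned: false,
    agentsBuiltByDifferentTeams: false,
  }),
);

// An orchestrator delegating to specialists owned by other teams.
console.log(
  protocolsNeeded({
    callsToolsDirectly: true,
    agentsIndependentlyDeployed: true,
    agentsIndependentlyVersioned: true,
    agentsBuiltByDifferentTeams: true,
  }),
);
```

An empty result is a valid answer too: agents that share a codebase and call no external tools can stay with direct function calls.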

MCP in Practice with Chanl

We built Chanl as an MCP-native platform. Every tool you create in Chanl is automatically exposed through a managed MCP server. You don't install, configure, or host anything. The SDK gives you direct access to tools and MCP operations:

```typescript
import Chanl from '@chanl/sdk';

const chanl = new Chanl({ apiKey: process.env.CHANL_API_KEY });

// List all tools in your workspace
const { data: tools } = await chanl.tools.list();
console.log(`${tools.items.length} tools available`);

// List MCP tools resolved for a specific agent
const { data: mcpTools } = await chanl.mcp.listTools('agent_abc123');
for (const tool of mcpTools.tools) {
  console.log(`${tool.name}: ${tool.description}`);
}

// Call a tool directly through the MCP layer
const { data: result } = await chanl.mcp.callTool(
  'agent_abc123',
  'lookup_order',
  { orderId: 'ORD-7742' }
);
console.log(result.content[0].text);
```

The chanl.tools.list() call returns all tools in your workspace: HTTP endpoints, OpenAPI-imported operations, and custom tools. The chanl.mcp.listTools() call returns the MCP-resolved tools for a specific agent, which includes the tool's schema exactly as the LLM sees it.

The chanl.mcp.callTool() method executes a tool through the full MCP pipeline: schema validation, secret injection, execution, and result formatting. It's the same path that voice and chat sessions use when an agent decides to call a tool during a conversation.

You can also get the full MCP configuration for connecting any MCP client (Claude Desktop, Cursor, or your own) to your workspace:

```typescript
// Get ready-to-paste MCP configuration
const { data: config } = await chanl.mcp.getConfig();

// config.agentConfigs.claude gives you the exact JSON
// for Claude Desktop's settings file
console.log(JSON.stringify(config.agentConfigs.claude, null, 2));
```

This means every tool you build and test through Chanl's monitoring and scenario testing is immediately available to any MCP-compatible client. Build once, connect everywhere. That's the promise of protocol standards, and MCP delivers on it today.

What to Adopt Now

Both protocols are real, both are governed by the Linux Foundation, and both solve problems you'll encounter if you're building agents for production. Here's the sequencing that makes sense for most teams.

Start with MCP. It's the more mature protocol, with broader tool support and deeper client integration. If your agent needs to call APIs, query databases, or execute any external action, MCP gives you a standard interface that works across every major AI platform. With 10,000+ servers already published, many integrations exist out of the box.

Add A2A when you hit the multi-agent boundary. That boundary is clear: you have agents that need to collaborate but can't share code. They're built on different frameworks, deployed independently, or maintained by different teams. A2A gives those agents a standard way to discover each other and exchange work without custom integration code.

Don't abstract too early. Some teams are building "protocol abstraction layers" that hide MCP and A2A behind a unified interface. That's premature. The protocols solve different problems, and flattening those differences into one API means you lose the semantics that make each useful. Use MCP when you're calling tools. Use A2A when you're delegating to agents. The distinction matters.

Remember the customer who needs to return a laptop and find a replacement? MCP connects your agent to the order database and refund API. A2A routes the product search to a specialist. Two protocols, one conversation, zero custom glue code. That is the stack settling into place.

Give your agent tools, not plumbing

Chanl turns every tool into a managed MCP server. Build tools, test them with [scenario simulations](/features/scenarios), and monitor every call in production. Your agent gets standard tool access across voice, chat, and messaging.

See how MCP works in Chanl

Co-founder

Building the platform for AI agents at Chanl — tools, testing, and observability for customer experience.
