Your evals pass in staging. Every scenario scores above threshold. The CI pipeline glows green. You merge the PR, deploy on Friday afternoon, and head into the weekend feeling good.

Two weeks later, a customer success manager messages you: "The agent has been quoting a refund policy we deprecated in January." You check the logs. No errors. No alerts. The agent confidently told 47 customers they could get full refunds on final-sale items. Your eval suite never caught it because your eval suite tests what you built, not what production became.

This is the eval gap. The distance between "works in staging" and "works in production, continuously, under real conditions." According to the LangChain 2026 State of AI Agents report, 57% of organizations now have agents in production, but quality remains the top barrier to deployment for 32% of respondents. The agents ship. The evals don't follow them.

This guide builds three production eval pipelines that close the gap, plus a CI/CD quality gate that ties them together. Not theoretical frameworks. Working TypeScript code using the Chanl SDK that you can wire into your deploy workflow today.

| What you'll build | What it catches |
|---|---|
| Automated scorecards | Quality degradation in production conversations, scored against rubrics with specific criteria |
| Scenario regression | Behavioral regressions before they reach customers, tested with AI personas that exercise edge cases |
| Drift detection | Gradual quality erosion over days and weeks, detected by comparing rolling scores to baselines |
| CI/CD quality gate | Deploys that would lower production quality, blocked before they ship |

Table of contents

- The eval gap between staging and production

- Prerequisites and setup

- Pipeline 1: Automated scorecards

- Pipeline 2: Scenario regression testing

- Pipeline 3: Drift detection

- Wiring it together: CI/CD quality gates

- The production eval playbook

- FAQs

The eval gap between staging and production

Staging evals test your agent against your imagination. Production evals test it against reality. The inputs differ (curated vs. messy), the context differs (static vs. changing), and the failure modes differ (binary vs. gradual drift). Understanding these differences is the first step toward closing the gap.

| Dimension | Dev / Staging Evals | Production Evals |
|---|---|---|
| Input distribution | Curated test cases you wrote | Real customer language, typos, code-switching, emotional escalation |
| Context freshness | Static knowledge base | Policies change, products update, pricing shifts |
| Conversation depth | 3-5 turns per test | 15-30 turns in complex support sessions |
| Edge case coverage | Scenarios you anticipated | Scenarios you never imagined |
| Frequency | On PR, maybe nightly | Continuous sampling of live traffic |
| Failure mode | Binary pass/fail | Gradual drift across multiple dimensions |
| Environment | Isolated, deterministic | Concurrent load, rate limits, tool latency |

Research from InsightFinder found that 91% of ML systems experience performance degradation without proactive intervention. Your agent will drift. The question is whether you detect it before or after your customers do.

Three types of production evals address three types of failures:

- Automated scorecards grade individual conversations against rubrics. They answer: "Is this specific conversation good?"

- Scenario regression runs synthetic conversations before deploy. It answers: "Will this change break anything?"

- Drift detection compares quality scores over time. It answers: "Is quality trending down?"

Each catches failures the others miss. Together, they form a closed loop.

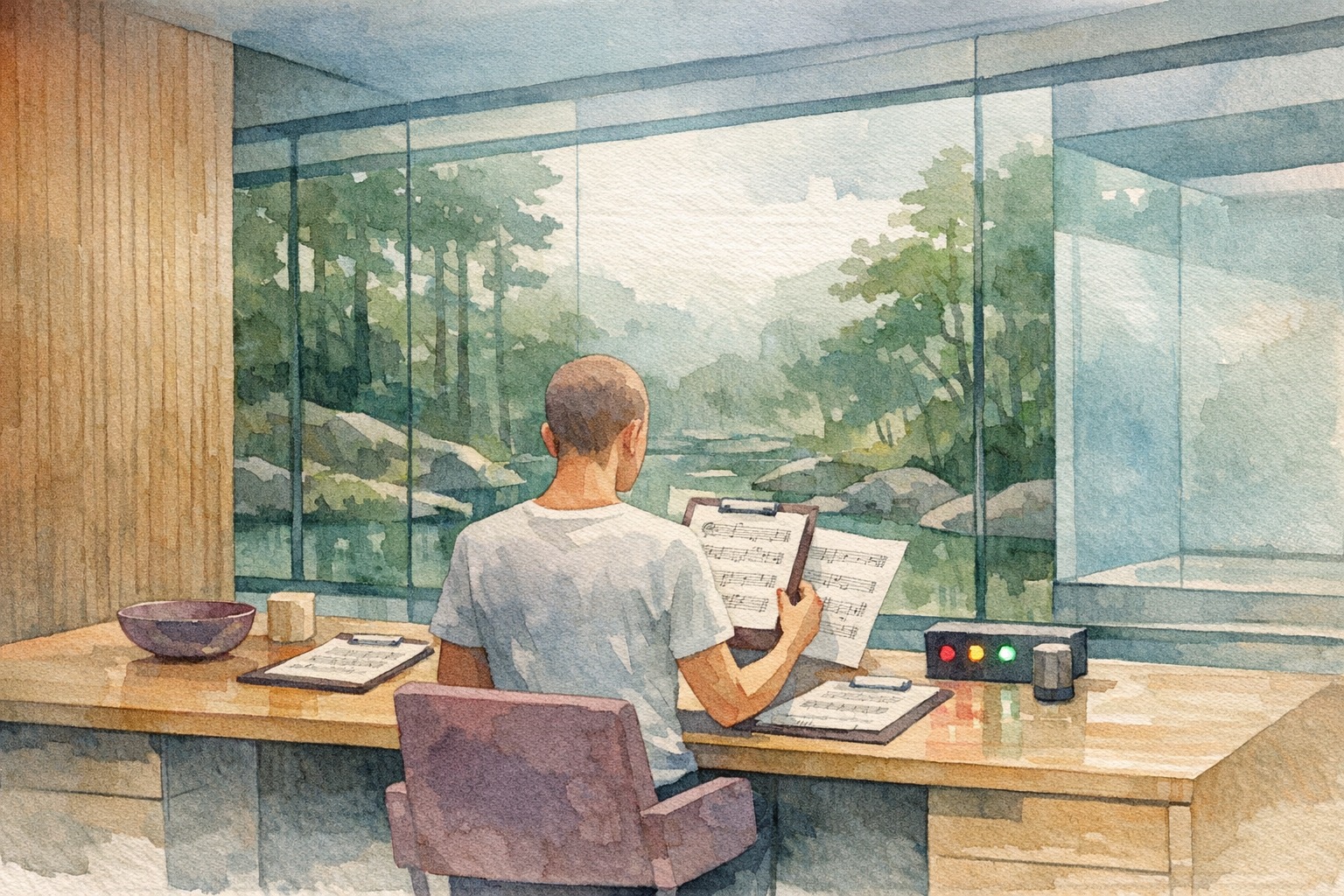

The diagram shows the feedback loop. Production scorecards feed drift detection. Drift detection triggers investigation. Investigation produces new scenarios. Scenarios prevent the same failure from shipping again. Each production incident makes your eval suite stronger.

Prerequisites and setup

You need Node.js 20+, TypeScript 5+, and a Chanl account with API access to follow the code examples. The Chanl SDK handles scorecards, scenarios, and analytics, though the patterns apply to any evaluation infrastructure.

```bash
npm install @chanl/sdk
```

Configure your client with your API key:

```typescript
import Chanl from '@chanl/sdk';

const chanl = new Chanl({
  apiKey: process.env.CHANL_API_KEY,
  baseUrl: 'https://platform.chanl.ai',
});
```

You'll also want a cron scheduler (GitHub Actions, or a simple node-cron job) for the drift detection pipeline. We'll get to that setup in Pipeline 3.

If you're new to agent evaluation concepts, How to Evaluate AI Agents: Build an Eval Framework from Scratch covers the foundational theory. This guide assumes you know what evals are and focuses on making them work in production.

Pipeline 1: Automated scorecards

Automated scorecards grade every sampled production conversation against specific criteria you define, giving you a continuous quality signal instead of periodic spot checks. They catch problems like outdated policy references or tone mismatches within days, not weeks.

A scorecard defines what "good" looks like for your agent. Not a vague "was the response helpful?" but specific, anchored criteria: Did the agent cite current policy? Did it resolve the issue without escalation? Did it maintain appropriate tone when the customer was frustrated?

Creating a production scorecard

Start by defining criteria that map to the failures you actually care about. Every team I've worked with starts with too many criteria (12+) and ends up consolidating to the five or six that produce actionable signal.

```typescript
// create-scorecard.ts
const scorecard = await chanl.scorecards.create({
  name: 'Production Quality - Customer Support',
  description: 'Grades live customer support conversations across five dimensions',
  criteria: [
    {
      name: 'Accuracy',
      description: 'Information provided is factually correct and reflects current policies',
      weight: 25,
      scoringGuide: `
        5: All facts correct, cites current policy, no outdated information
        4: All facts correct, minor omission of relevant detail
        3: Mostly correct, one factual imprecision that doesn't affect outcome
        2: Contains one incorrect fact that could mislead the customer
        1: Multiple incorrect facts or references deprecated policy
      `,
    },
    {
      name: 'Task Resolution',
      description: 'The customer issue was fully addressed without requiring follow-up',
      weight: 25,
      scoringGuide: `
        5: Issue fully resolved, customer confirmed satisfaction
        4: Issue resolved, minor loose end acknowledged
        3: Partial resolution, customer needs one follow-up action
        2: Issue identified but not resolved, escalation needed
        1: Issue misidentified or not addressed
      `,
    },
    {
      name: 'Policy Adherence',
      description: 'Agent follows current company policies and does not make unauthorized commitments',
      weight: 20,
      scoringGuide: `
        5: All statements align with current policy, no unauthorized promises
        4: Policy adherent, slightly imprecise language on one policy point
        3: Mostly adherent, one statement could be misinterpreted as a commitment
        2: Makes one commitment outside policy scope
        1: Contradicts active policy or quotes deprecated terms
      `,
    },
    {
      name: 'Tone Appropriateness',
      description: 'Communication style matches the emotional context of the conversation',
      weight: 15,
      scoringGuide: `
        5: Tone perfectly matches context, empathetic when needed, professional throughout
        4: Appropriate tone with one minor mismatch in emotional context
        3: Generally appropriate, slightly mechanical in emotional moments
        2: Noticeably tone-deaf in one exchange
        1: Inappropriate tone that could upset the customer
      `,
    },
    {
      name: 'Efficiency',
      description: 'Conversation reaches resolution without unnecessary back-and-forth',
      weight: 15,
      scoringGuide: `
        5: Resolves in minimum turns, asks all needed questions upfront
        4: Efficient with one unnecessary clarifying question
        3: Adequate pacing, one redundant exchange
        2: Notable inefficiency, customer repeats information
        1: Excessive back-and-forth, customer visibly frustrated by repetition
      `,
    },
  ],
});

console.log(`Scorecard created: ${scorecard.id}`);
```

The scoring guides are doing the heavy lifting here. Each level has a concrete, observable anchor. "Contains one incorrect fact that could mislead the customer" is auditable. "Somewhat inaccurate" is not. This specificity is what makes automated grading reliable. If your LLM judge produces inconsistent scores, 90% of the time the problem is vague rubric anchors.
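To see how the weights interact with the scores, here is a minimal sketch of one plausible way to combine per-criterion scores into an overall score: a plain weighted average. This is an assumption for illustration only; the SDK's actual normalization may differ, and `CriterionScore` and `weightedOverall` are hypothetical names.

```typescript
// Hypothetical shape: one graded criterion with its rubric weight.
interface CriterionScore {
  name: string;
  weight: number; // relative weight, e.g. 25 for 25%
  score: number; // 1-5 rubric score
}

// Weighted average on the same 1-5 scale as the rubric.
// Assumption: weights are relative, so they need not sum to exactly 100.
function weightedOverall(criteria: CriterionScore[]): number {
  const totalWeight = criteria.reduce((sum, c) => sum + c.weight, 0);
  if (totalWeight <= 0) {
    throw new Error('Scorecard needs at least one criterion with positive weight');
  }
  const weightedSum = criteria.reduce((sum, c) => sum + c.weight * c.score, 0);
  return weightedSum / totalWeight;
}

// A conversation that is strong everywhere except Policy Adherence.
const exampleConversation: CriterionScore[] = [
  { name: 'Accuracy', weight: 25, score: 5 },
  { name: 'Task Resolution', weight: 25, score: 4 },
  { name: 'Policy Adherence', weight: 20, score: 1 },
  { name: 'Tone Appropriateness', weight: 15, score: 5 },
  { name: 'Efficiency', weight: 15, score: 5 },
];

const exampleOverall = weightedOverall(exampleConversation);
```

Under this assumption, a conversation that contradicts active policy (Policy Adherence of 1) still lands at 3.95 overall. That is one reason to track per-criterion scores and not only the headline number.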

Evaluating production conversations

Once your scorecard exists, evaluate sampled production conversations against it:

```typescript
// evaluate-conversation.ts
async function evaluateConversation(callId: string, scorecardId: string) {
  const result = await chanl.scorecards.evaluate({
    callId,
    scorecardId,
  });

  return {
    callId,
    overallScore: result.overallScore,
    criteriaScores: result.criteria.map((c) => ({
      name: c.name,
      score: c.score,
      reasoning: c.reasoning,
    })),
    evaluatedAt: new Date().toISOString(),
  };
}
```

The SDK handles the LLM-as-judge execution, rubric application, and score normalization. You get back per-criterion scores with reasoning, which matters when you're debugging why a score dropped. A bare "3.2" tells you nothing. "Scored 2 on Policy Adherence because the agent quoted the Q3 2025 refund window instead of the current Q1 2026 policy" tells you exactly what to fix.

Sampling strategy for production traffic

You don't need to evaluate every conversation. Statistical significance at scale requires less coverage than you'd think.

```typescript
// sample-and-evaluate.ts
// oneDayAgo, now, sleep, mean, and aggregateByCriteria are small helpers
// assumed to be defined elsewhere in your codebase.
async function runProductionEvalBatch(
  scorecardId: string,
  sampleRate: number = 0.05,
) {
  // Pull recent conversations
  const recentCalls = await chanl.calls.list({
    startDate: oneDayAgo(),
    endDate: now(),
    limit: 1000,
  });

  // Random sample
  const sampled = recentCalls.items
    .filter(() => Math.random() < sampleRate)
    .slice(0, 50); // Cap at 50 per batch to manage cost

  const results = [];
  for (const call of sampled) {
    const result = await evaluateConversation(call.id, scorecardId);
    results.push(result);

    // Rate limit: avoid hammering the eval endpoint
    await sleep(500);
  }

  return {
    batchSize: results.length,
    averageScore: mean(results.map((r) => r.overallScore)),
    criteriaAverages: aggregateByCriteria(results),
    lowScorers: results.filter((r) => r.overallScore < 3.0),
    evaluatedAt: new Date().toISOString(),
  };
}
```

At 5% sampling with 1,000 daily conversations, you're evaluating about 50 conversations per day. Across three days that is roughly 150 scored conversations, enough to detect a 0.3-point shift in the mean with 95% confidence, provided per-conversation scores have a standard deviation of about 1 point or less. If your volume is under 100 conversations daily, evaluate all of them. The cost is negligible and the signal is stronger.
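The arithmetic behind that kind of claim can be sketched with a one-sample z-test against a fixed baseline. This is a simplified model, not something the SDK provides: it ignores statistical power and any uncertainty in the baseline itself, and the standard deviation of 1.0 is an assumed value you should replace with your own. `minDetectableShift` and `samplesNeeded` are hypothetical helper names.

```typescript
// Smallest shift in the mean score that `sampleSize` conversations can
// distinguish from noise, using a one-sample z-test against a fixed baseline.
// z = 1.96 corresponds to 95% confidence (two-sided).
function minDetectableShift(stdDev: number, sampleSize: number, z = 1.96): number {
  if (sampleSize <= 0) throw new Error('sampleSize must be positive');
  return (z * stdDev) / Math.sqrt(sampleSize);
}

// Inverse question: how many conversations are needed to detect a given shift.
function samplesNeeded(stdDev: number, shift: number, z = 1.96): number {
  if (shift <= 0) throw new Error('shift must be positive');
  return Math.ceil(((z * stdDev) / shift) ** 2);
}

// Three days at 50 conversations per day, assuming a standard deviation of 1.0
const shiftAfterThreeDays = minDetectableShift(1.0, 150); // about 0.16 points
const neededForPointThree = samplesNeeded(1.0, 0.3); // 43 conversations
```

If your scores are noisier than the assumed 1.0, the required sample grows with the square of the standard deviation, so measure it from your own data before trusting a fixed sample rate.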

For a deeper dive on scorecard design, rubric calibration, and the theory behind LLM-as-judge scoring, see How to Evaluate AI Agents: Build an Eval Framework from Scratch. The Chanl Scorecards feature handles the infrastructure so you can focus on criteria design.

Pulling scorecard results for analysis

Evaluations accumulate over time. Query them to understand quality trends per agent:

```typescript
// analyze-results.ts
const results = await chanl.scorecards.listResults({
  agentId: 'agent_abc123',
  dateRange: {
    start: thirtyDaysAgo(),
    end: now(),
  },
});

// Group by week for trend analysis
const weeklyScores = groupByWeek(results.items);

for (const [week, scores] of Object.entries(weeklyScores)) {
  const avg = mean(scores.map((s) => s.overallScore));

  // Skip results that lack the criterion instead of counting them as 0,
  // which would drag the weekly average down artificially
  const policyScores = scores
    .map((s) => s.criteria.find((c) => c.name === 'Policy Adherence')?.score)
    .filter((score): score is number => score !== undefined);
  const policyAvg = mean(policyScores);

  console.log(`Week ${week}: Overall ${avg.toFixed(2)}, Policy ${policyAvg.toFixed(2)}`);
}
```

This is where the refund policy failure from the opening would have been caught. A weekly policy adherence average dropping from 4.3 to 3.1 is visible in the data days before 47 customers get wrong information.
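The `groupByWeek` helper used above is not shown in the article. A minimal sketch, assuming each result carries an ISO 8601 `evaluatedAt` timestamp (a hypothetical field name here) and that weeks start on Monday in UTC, might look like this:

```typescript
// Hypothetical minimal shape: only the timestamp matters for grouping.
interface TimestampedResult {
  evaluatedAt: string; // ISO 8601 timestamp
}

// Returns the ISO date (YYYY-MM-DD) of the Monday that starts the UTC week.
function weekStart(isoTimestamp: string): string {
  const date = new Date(isoTimestamp);
  if (isNaN(date.getTime())) {
    throw new Error(`Invalid timestamp: ${isoTimestamp}`);
  }
  const daysSinceMonday = (date.getUTCDay() + 6) % 7; // Sunday (0) becomes 6
  const monday = new Date(
    Date.UTC(date.getUTCFullYear(), date.getUTCMonth(), date.getUTCDate() - daysSinceMonday),
  );
  return monday.toISOString().slice(0, 10);
}

// Buckets results by the Monday of their week, preserving input order per bucket.
function groupByWeek<T extends TimestampedResult>(items: T[]): Record<string, T[]> {
  const weeks: Record<string, T[]> = {};
  for (const item of items) {
    const key = weekStart(item.evaluatedAt);
    if (!weeks[key]) weeks[key] = [];
    weeks[key].push(item);
  }
  return weeks;
}
```

Keying each bucket by its Monday date keeps the keys sortable as plain strings, which makes week-over-week comparisons straightforward.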

Pipeline 2: Scenario regression testing

Scenario regression tests run synthetic conversations against your agent before every deploy, catching behavioral regressions before they reach customers. While scorecards evaluate what already happened, scenarios evaluate what is about to happen by exercising edge cases and known failure modes with AI personas.

The idea is straightforward: create AI personas that behave like your trickiest customers, run them against your agent, score the results, and block the deploy if quality drops.

Creating test personas

A good test persona isn't just "angry customer." It's a specific behavioral profile that exercises a specific failure mode.

```typescript
// create-personas.ts

// Persona 1: Tests policy boundary awareness
const policyProber = await chanl.personas.create({
  name: 'Policy Boundary Prober',
  traits: {
    personality: 'Polite but persistent. Asks follow-up questions that push toward policy edges.',
    goal: 'Get a refund on a final-sale item by finding exceptions or loopholes.',
    behavior: 'Starts reasonable, then escalates requests incrementally. Cites things friends told them. Asks "but what if..." questions.',
    style: 'Conversational, uses casual language, occasionally misspells words.',
  },
});

// Persona 2: Tests context retention over long conversations
const contextStresser = await chanl.personas.create({
  name: 'Context Window Stresser',
  traits: {
    personality: 'Friendly but disorganized. Provides information across many turns.',
    goal: 'Resolve a billing issue that requires remembering details from early in the conversation.',
    behavior: 'Mentions account number on turn 2, problem on turn 5, relevant detail on turn 8. Expects agent to connect all three without repeating.',
    style: 'Verbose, includes personal anecdotes between relevant details.',
  },
});

// Persona 3: Tests topic-switch handling
const topicSwitcher = await chanl.personas.create({
  name: 'Mid-Conversation Switcher',
  traits: {
    personality: 'Busy, slightly impatient. Has multiple issues and jumps between them.',
    goal: 'Resolve both a shipping question and a billing dispute in one conversation.',
    behavior: 'Starts with shipping, switches to billing mid-conversation, then asks a follow-up about the original shipping question.',
    style: 'Short messages, expects quick answers, occasionally references "what you said earlier."',
  },
});

console.log('Personas created:', [policyProber.id, contextStresser.id, topicSwitcher.id]);
```

Each persona targets a specific category of production failure. The Policy Boundary Prober catches the deprecated refund policy problem. The Context Window Stresser catches agent drift failures. The Topic Switcher catches state management bugs. Build your persona library from your actual production incidents.

Running scenario regression

With personas defined, run them as full simulated conversations against your agent:

```typescript
// run-scenarios.ts
async function runScenarioSuite(agentId: string) {
  // Run all scenarios for this agent
  const execution = await chanl.scenarios.runAll({ agentId });

  console.log(`Suite started: ${execution.id}`);
  console.log(`Scenarios: ${execution.totalScenarios}`);

  // Poll for completion
  let status = execution;
  while (status.status === 'running') {
    await sleep(5000);
    status = await chanl.scenarios.getExecution(execution.id);
    console.log(`Progress: ${status.completed}/${status.totalScenarios}`);
  }

  return status;
}
```

Each scenario in the suite runs a multi-turn conversation between the persona and your agent, then scores the result against the scenario's expected outcomes. The scoring isn't binary pass/fail. It's the same rubric-based evaluation as your production scorecards, which means you can directly compare scenario scores to production scores.
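The polling loop above runs until the suite stops reporting `running`, which means a stuck execution would hang a CI job indefinitely. One way to bound it is a generic polling helper with a deadline. This is a sketch: `pollUntilDone` and `ExecutionStatus` are hypothetical names, and the only thing assumed about the status object is the `status` string the article's code already reads.

```typescript
// Hypothetical minimal shape of an execution status.
interface ExecutionStatus {
  status: string;
}

const wait = (ms: number): Promise<void> =>
  new Promise((resolve) => setTimeout(resolve, ms));

// Polls fetchStatus until the status is no longer 'running',
// or throws once timeoutMs has elapsed.
async function pollUntilDone<T extends ExecutionStatus>(
  fetchStatus: () => Promise<T>,
  intervalMs = 5000,
  timeoutMs = 15 * 60 * 1000,
): Promise<T> {
  const deadline = Date.now() + timeoutMs;
  let current = await fetchStatus();
  while (current.status === 'running') {
    if (Date.now() >= deadline) {
      throw new Error(`Suite still running after ${timeoutMs} ms`);
    }
    await wait(intervalMs);
    current = await fetchStatus();
  }
  return current;
}
```

In `runScenarioSuite`, the loop could then be replaced by something like `pollUntilDone(() => chanl.scenarios.getExecution(execution.id))`, with the timeout chosen to fit your CI job limit.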

Evaluating individual scenario results

For debugging failed scenarios, pull the full execution detail:

```typescript
// check-scenario.ts
async function checkScenarioResult(executionId: string) {
  const execution = await chanl.scenarios.getExecution(executionId);

  for (const result of execution.results) {
    const scenario = await chanl.scenarios.get({ scenarioId: result.scenarioId });

    console.log(`\n--- ${scenario.name} ---`);
    console.log(`Status: ${result.status}`);
    console.log(`Score: ${result.score}`);

    if (result.score < 3.5) {
      console.log(`⚠ Below threshold`);
      console.log(`Turns: ${result.turnCount}`);
      console.log(`Failed criteria:`);
      for (const criterion of result.criteria.filter((c) => c.score < 3)) {
        console.log(` - ${criterion.name}: ${criterion.score} - ${criterion.reasoning}`);
      }
    }
  }

  return {
    passed: execution.results.every((r) => r.score >= 3.5),
    failures: execution.results.filter((r) => r.score < 3.5),
    averageScore: mean(execution.results.map((r) => r.score)),
  };
}
```

When a scenario fails, the per-criterion reasoning tells you exactly what went wrong. "Policy Adherence scored 1: agent quoted the Q3 2025 return window (30 days) instead of the current Q1 2026 policy (14 days for electronics, 30 days for apparel)." That's a specific, fixable finding. Not "quality seems lower."

Building your scenario library from production incidents

The most valuable scenarios come from real failures. Every time production scorecards flag a low-quality conversation, you have the raw material for a new regression test.

```typescript
// incident-to-scenario.ts
async function createScenarioFromIncident(
  lowScoredCallId: string,
  agentId: string,
) {
  // Pull the conversation that scored poorly
  const call = await chanl.calls.get({ callId: lowScoredCallId });

  // Fall back to a placeholder so a missing summary does not
  // produce a persona named "Regression - undefined"
  const label = (call.summary ?? 'unlabeled incident').substring(0, 50);

  // Create a persona that mimics the customer's behavior
  const persona = await chanl.personas.create({
    name: `Regression - ${label}`,
    traits: {
      personality: 'Mirrors the customer behavior from the flagged conversation',
      goal: call.summary || 'Reproduce the failure scenario',
      behavior: `Follows a conversation pattern similar to call ${lowScoredCallId}`,
      style: 'Natural conversational style',
    },
  });

  // Create a scenario that tests this specific failure mode
  const scenario = await chanl.scenarios.create({
    name: `Regression: ${call.failureCategory || 'Quality Drop'} - ${new Date().toISOString().split('T')[0]}`,
    agentId,
    personaId: persona.id,
    expectedOutcome: 'Agent handles this correctly after the fix',
  });

  return { persona, scenario };
}
```

This creates a ratchet effect. Every production failure becomes a regression test. The longer your system runs, the more scenarios it accumulates, and the harder it becomes for old bugs to resurface. After six months of this practice, most teams have 40-60 scenarios that collectively test every failure mode they've encountered.

For more on designing effective test scenarios and the role of AI personas, see our scenario testing guide. The Chanl Scenarios feature runs these as scheduled suites with built-in scoring.

Pipeline 3: Drift detection

Drift detection compares rolling quality scores against a baseline to answer one question: is your agent getting worse over time? You pull scorecard results across a window, compute the statistical distance from your known-good baseline, and alert when the gap crosses a threshold. The subtlety is choosing the right windows, the right thresholds, and avoiding false alarms.

Establishing a baseline

Your baseline is the quality level you've validated and accepted. Typically, it's the average of your first two stable weeks of production scorecard data.

```typescript
// baseline.ts
async function establishBaseline(agentId: string): Promise<Baseline> {
  // Pull the most recent two weeks of production scores.
  // Run this at the end of your first two stable weeks so the
  // window covers the period you want to treat as "known good".
  const results = await chanl.calls.getMetrics({
    agentId,
    dateRange: {
      start: fourteenDaysAgo(),
      end: now(),
    },
  });

  const scores = results.qualityScores;

  return {
    overall: {
      mean: mean(scores.map((s) => s.overall)),
      stdDev: standardDeviation(scores.map((s) => s.overall)),
    },
    byCriterion: Object.fromEntries(
      ['accuracy', 'taskResolution', 'policyAdherence', 'tone', 'efficiency'].map(
        (criterion) => [
          criterion,
          {
            mean: mean(scores.map((s) => s[criterion] ?? 0)),
            stdDev: standardDeviation(scores.map((s) => s[criterion] ?? 0)),
          },
        ],
      ),
    ),
    sampleSize: scores.length,
    period: {
      start: fourteenDaysAgo().toISOString(),
      end: now().toISOString(),
    },
  };
}
```

Comparing rolling windows to baseline

Once you have a baseline, compare the latest week against it:

```typescript
// drift-check.ts
async function checkForDrift(
  agentId: string,
  baseline: Baseline,
): Promise<DriftReport> {
  // Pull the last 7 days of scores
  const recent = await chanl.calls.getMetrics({
    agentId,
    dateRange: {
      start: sevenDaysAgo(),
      end: now(),
    },
  });

  const recentScores = recent.qualityScores;
  const recentMean = mean(recentScores.map((s) => s.overall));

  // Calculate drift magnitude
  const drift = baseline.overall.mean - recentMean;
  const driftInStdDevs = drift / baseline.overall.stdDev;

  // Per-criterion analysis
  const criterionDrift = Object.entries(baseline.byCriterion).map(
    ([criterion, baselineStats]) => {
      const recentCriterionMean = mean(
        recentScores.map((s) => s[criterion] ?? 0),
      );
      const criterionDriftVal = baselineStats.mean - recentCriterionMean;

      return {
        criterion,
        baselineMean: baselineStats.mean,
        recentMean: recentCriterionMean,
        drift: criterionDriftVal,
        severity: classifyDrift(criterionDriftVal, baselineStats.stdDev),
      };
    },
  );

  return {
    agentId,
    baselineMean: baseline.overall.mean,
    recentMean,
    overallDrift: drift,
    driftInStdDevs,
    severity: classifyDrift(drift, baseline.overall.stdDev),
    criterionDrift,
    sampleSize: recentScores.length,
    period: {
      start: sevenDaysAgo().toISOString(),
      end: now().toISOString(),
    },
  };
}

function classifyDrift(
  drift: number,
  stdDev: number,
): 'none' | 'warning' | 'critical' {
  const deviations = drift / stdDev;
  if (deviations >= 2) return 'critical';
  if (deviations >= 1) return 'warning';
  return 'none';
}
```

The 1-sigma / 2-sigma thresholds aren't arbitrary. They map to a practical reality: one standard deviation of drift is a shift that a careful human reviewer would notice. Two standard deviations is a shift that customers will complain about. Adjusting these thresholds up reduces false alarms but increases detection latency. Start aggressive and loosen based on your false positive rate.
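To make the thresholds concrete, here is a small worked example. The `mean` and `standardDeviation` helpers are not defined in the article, so the versions below are assumptions (a sample standard deviation that divides by n - 1), and the baseline scores are made-up illustrative numbers. `classifyDrift` repeats the rule from the code above so the block runs on its own.

```typescript
function mean(values: number[]): number {
  if (values.length === 0) throw new Error('mean of empty array');
  return values.reduce((sum, v) => sum + v, 0) / values.length;
}

// Sample standard deviation (divides by n - 1).
function standardDeviation(values: number[]): number {
  if (values.length < 2) return 0;
  const avg = mean(values);
  const squaredDiffs = values.reduce((sum, v) => sum + (v - avg) ** 2, 0);
  return Math.sqrt(squaredDiffs / (values.length - 1));
}

type DriftSeverity = 'none' | 'warning' | 'critical';

// Same rule as classifyDrift above: positive drift means quality went down.
function classifyDrift(drift: number, stdDev: number): DriftSeverity {
  const deviations = drift / stdDev;
  if (deviations >= 2) return 'critical';
  if (deviations >= 1) return 'warning';
  return 'none';
}

// Illustrative baseline: mean 4.25, standard deviation about 0.46
const baselineScores = [4.5, 4.0, 4.5, 3.5, 4.0, 4.5, 4.0, 5.0];
const baselineMean = mean(baselineScores);
const baselineStdDev = standardDeviation(baselineScores);

// Classifies a recent weekly mean against the illustrative baseline.
const severityAt = (recentMean: number): DriftSeverity =>
  classifyDrift(baselineMean - recentMean, baselineStdDev);
```

With this baseline, a weekly mean of 4.1 is within noise, 3.7 crosses the warning line (about 1.2 standard deviations below baseline), and 3.2 is critical (about 2.3). A week that scores above baseline yields negative drift and never alerts.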

Running drift detection on a schedule

Wire the drift check into a scheduled job that runs daily or weekly:

```typescript
// drift-monitor.ts
async function runDriftMonitor(agents: string[], baseline: Map<string, Baseline>) {
  const reports: DriftReport[] = [];

  for (const agentId of agents) {
    const agentBaseline = baseline.get(agentId);
    if (!agentBaseline) {
      console.warn(`No baseline for agent ${agentId}, skipping`);
      continue;
    }

    const report = await checkForDrift(agentId, agentBaseline);
    reports.push(report);

    if (report.severity === 'critical') {
      await sendAlert({
        channel: 'pagerduty',
        title: `Critical quality drift: agent ${agentId}`,
        body: formatDriftReport(report),
      });
    } else if (report.severity === 'warning') {
      await sendAlert({
        channel: 'slack',
        title: `Quality drift warning: agent ${agentId}`,
        body: formatDriftReport(report),
      });
    }

    // Log per-criterion breakdown for dashboarding
    for (const criterion of report.criterionDrift) {
      if (criterion.severity !== 'none') {
        console.log(
          ` ${criterion.criterion}: ${criterion.baselineMean.toFixed(2)} → ${criterion.recentMean.toFixed(2)} (${criterion.severity})`,
        );
      }
    }
  }

  return reports;
}
```

The per-criterion breakdown is what makes drift detection actionable. "Overall quality dropped 0.4 points" tells you something is wrong. "Policy Adherence dropped 1.2 points while all other criteria held steady" tells you exactly where to look. In the refund policy scenario, the Policy Adherence criterion would have been the canary.
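The monitor above calls a `formatDriftReport` helper that the article does not define. A minimal sketch of what it might produce follows. The `DriftSummary` type is a hypothetical subset of the report fields returned by `checkForDrift`, and the output layout is only one reasonable choice.

```typescript
type Severity = 'none' | 'warning' | 'critical';

interface CriterionDrift {
  criterion: string;
  baselineMean: number;
  recentMean: number;
  drift: number;
  severity: Severity;
}

// Subset of the drift report fields used above; only what the formatter needs.
interface DriftSummary {
  agentId: string;
  baselineMean: number;
  recentMean: number;
  severity: Severity;
  sampleSize: number;
  criterionDrift: CriterionDrift[];
}

// Builds a plain-text alert body, listing the worst-drifting criteria first.
function formatDriftReport(report: DriftSummary): string {
  const header =
    `Agent ${report.agentId}: overall ${report.baselineMean.toFixed(2)} -> ` +
    `${report.recentMean.toFixed(2)} (${report.severity}, n=${report.sampleSize})`;

  const flagged = report.criterionDrift
    .filter((c) => c.severity !== 'none')
    .sort((a, b) => b.drift - a.drift)
    .map(
      (c) =>
        `- ${c.criterion}: ${c.baselineMean.toFixed(2)} -> ${c.recentMean.toFixed(2)} (${c.severity})`,
    );

  return flagged.length > 0
    ? [header, 'Drifting criteria:'].concat(flagged).join('\n')
    : `${header}\nNo individual criterion crossed a threshold.`;
}
```

Putting the sample size in the header lets whoever receives the alert judge at a glance whether the week had enough conversations for the drift to be trustworthy.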

For more on production monitoring patterns and how quality metrics connect to operational health, see AI Agent Observability: What to Monitor When Your Agent Goes Live and the Chanl Analytics dashboard.

Wiring it together: CI/CD quality gates

Connect all three pipelines into your deploy workflow so that scenario regression blocks bad PRs, scorecards run continuously on production traffic, and drift detection alerts you to gradual degradation. The real payoff is the feedback loop: production incidents become new scenarios that prevent the same failure from shipping again.

GitHub Actions integration

Here's a complete CI/CD workflow that blocks deploys when agent quality would degrade:

```yaml
# .github/workflows/agent-eval.yml
name: Agent Quality Gate

on:
  pull_request:
    paths:
      - 'prompts/**'
      - 'agents/**'
      - 'tools/**'
      - 'config/agent-*.yaml'

# Let the workflow token post a comment on the pull request
permissions:
  contents: read
  pull-requests: write

jobs:
  scenario-regression:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4

      - name: Setup Node.js
        uses: actions/setup-node@v4
        with:
          node-version: '20'

      - name: Install dependencies
        run: npm ci

      - name: Run scenario regression suite
        env:
          CHANL_API_KEY: ${{ secrets.CHANL_API_KEY }}
        run: npx ts-node scripts/run-regression.ts

      - name: Post results to PR
        if: always()
        uses: actions/github-script@v7
        with:
          script: |
            const fs = require('fs');

            // The runner may crash before writing results; skip the comment in that case
            if (!fs.existsSync('eval-results.json')) {
              core.warning('eval-results.json not found; skipping PR comment');
              return;
            }
            const results = JSON.parse(fs.readFileSync('eval-results.json', 'utf-8'));

            const body = `## Agent Eval Results

            **Overall**: ${results.passed ? '✅ Passed' : '❌ Failed'}

            **Average Score**: ${results.averageScore.toFixed(2)} / 5.0

            **Scenarios**: ${results.passedCount}/${results.totalCount} passed

            ${results.failures.length > 0 ? `### Failures\n${results.failures.map(f =>
              `- **${f.name}**: ${f.score.toFixed(2)} - ${f.failureReason}`
            ).join('\n')}` : ''}

            ${results.warnings.length > 0 ? `### Warnings\n${results.warnings.map(w =>
              `- **${w.name}**: ${w.score.toFixed(2)} - ${w.note}`
            ).join('\n')}` : ''}`;

            await github.rest.issues.createComment({
              issue_number: context.issue.number,
              owner: context.repo.owner,
              repo: context.repo.repo,
              body,
            });
```

The regression runner script

The script that the CI job calls:

```typescript
// scripts/run-regression.ts
import Chanl from '@chanl/sdk';
import { writeFileSync } from 'fs';

const chanl = new Chanl({
  apiKey: process.env.CHANL_API_KEY!,
  baseUrl: 'https://platform.chanl.ai',
});

const QUALITY_THRESHOLD = 3.5;
const CRITICAL_THRESHOLD = 3.0;

async function main() {
  const agentId = process.env.AGENT_ID || 'agent_production_support';

  // Run the full scenario suite
  const execution = await chanl.scenarios.runAll({ agentId });

  // Wait for completion
  let status = await chanl.scenarios.getExecution(execution.id);
  while (status.status === 'running') {
    await new Promise((r) => setTimeout(r, 5000));
    status = await chanl.scenarios.getExecution(execution.id);
  }

  // An empty suite would pass vacuously (every() is true on an empty array)
  if (status.results.length === 0) {
    throw new Error('No scenario results returned; refusing to pass the quality gate');
  }

  // Analyze results
  const results = {
    passed: status.results.every((r) => r.score >= QUALITY_THRESHOLD),
    averageScore: mean(status.results.map((r) => r.score)),
    totalCount: status.results.length,
    passedCount: status.results.filter((r) => r.score >= QUALITY_THRESHOLD).length,
    // Failures: below the critical threshold
    failures: status.results
      .filter((r) => r.score < CRITICAL_THRESHOLD)
      .map((r) => ({
        name: r.scenarioName,
        score: r.score,
        failureReason: r.criteria
          .filter((c) => c.score < 3)
          .map((c) => `${c.name}: ${c.reasoning}`)
          .join('; '),
      })),
    // Warnings: between the critical and quality thresholds
    warnings: status.results
      .filter((r) => r.score >= CRITICAL_THRESHOLD && r.score < QUALITY_THRESHOLD)
      .map((r) => ({
        name: r.scenarioName,
        score: r.score,
        note: r.criteria
          .filter((c) => c.score < 4)
          .map((c) => `${c.name}: ${c.score}`)
          .join(', '),
      })),
  };

  // Write results for the CI job to read
  writeFileSync('eval-results.json', JSON.stringify(results, null, 2));

  // Exit with appropriate code. Report every scenario under the quality
  // threshold, not only the critical failures, so the count matches the gate.
  if (!results.passed) {
    const belowQuality = results.totalCount - results.passedCount;
    console.error(
      `\n❌ Quality gate failed. ${belowQuality} of ${results.totalCount} scenarios scored below ${QUALITY_THRESHOLD} ` +
        `(${results.failures.length} below the critical threshold of ${CRITICAL_THRESHOLD}).`,
    );
    process.exit(1);
  }

  console.log(`\n✅ All ${results.totalCount} scenarios passed. Average: ${results.averageScore.toFixed(2)}`);
}

function mean(values: number[]): number {
  return values.reduce((sum, v) => sum + v, 0) / values.length;
}

main().catch((err) => {
  console.error('Regression suite failed:', err);
  process.exit(1);
});
```

The path filter in the GitHub Actions config is important. You don't need to run agent evals on every PR. Only PRs that touch prompts, agent configuration, tools, or related config files trigger the quality gate. This keeps CI fast for unrelated changes while enforcing evaluation on every change that could affect agent behavior.

The feedback loop in practice

The key mechanic is the ratchet: every production incident becomes a regression scenario. When drift detection flags a Policy Adherence drop, you investigate, find the root cause, and write a scenario that catches that exact failure. Next deploy, the scenario suite includes it. The eval suite gets stronger with every failure it catches.

For a broader look at what to monitor beyond quality scores, What to Trace When Your AI Agent Hits Production covers the operational observability layer that complements eval pipelines. The Chanl Monitoring feature provides the real-time dashboard for tracking these metrics across all your agents.

The production eval playbook

Go from zero to full production eval coverage in four weeks: scorecards first for data, baselines second for thresholds, scenarios third for regression, CI/CD last for enforcement. Here is the week-by-week breakdown.

Week 1: Establish scorecards. Create one scorecard with four to six criteria. Start evaluating 100% of production conversations (if volume is under 100/day) or 10% (if higher). Don't alert on anything yet. Just collect data.

Week 2: Set baselines. After one to two weeks of data, compute your baseline means and standard deviations per criterion. These become your thresholds. Enable drift detection with warning alerts only (Slack, not PagerDuty).

Week 3: Build scenarios from data. Look at your lowest-scoring conversations from weeks 1-2. Build 10-15 regression scenarios from them. Create personas that exercise the specific failure patterns you found. Run the suite manually to establish scenario baselines.

Week 4: Wire CI/CD. Add the GitHub Actions quality gate. Start with a lenient gate threshold (3.0) so routine deploys are not blocked by noise, then tighten it over time. Enable critical drift alerts. You now have pre-deploy and post-deploy coverage.

Ongoing: Ratchet. Every production incident becomes a regression scenario. Review drift reports weekly. Tighten thresholds as quality improves. Re-baseline quarterly or after major agent changes.

The key insight is that production evals are not a one-time project. They're an operational practice, like monitoring or incident response. The eval suite grows with your agent. The baselines shift as quality improves. The scenarios accumulate as you encounter (and fix) new failure modes.

| Week | Action | Outcome |
|---|---|---|
| 1 | Deploy scorecards, evaluate all or sampled traffic | Raw quality data flowing |
| 2 | Compute baselines, enable warning alerts | Know what "normal" looks like |
| 3 | Build 10-15 scenarios from low-scoring conversations | Regression coverage for known failures |
| 4 | Wire CI/CD quality gate | Deploys blocked when quality would drop |
| Ongoing | Incident-to-scenario ratchet | Suite gets stronger with every failure |

The teams that do this well share one trait: they treat their eval suite with the same seriousness as their test suite. Eval coverage is tracked. Eval regressions are bugs. Eval gaps are technical debt. When a production failure surfaces that no eval caught, the first question is: "Why didn't we have a scenario for this?" And the first action is to write one.

Remember those 47 customers who got quoted a deprecated refund policy? With a production scorecard tracking Policy Adherence, the score drop from 4.3 to 3.1 would have been visible within days. With a drift alert at 1-sigma, the team would have investigated before customer number 5, not after customer number 47. That is the difference between evals that test what you built and evals that watch what production became.

Start catching quality drift today

Chanl scorecards, scenario regression, and drift detection run continuously across your production agents. Define your rubrics, build your scenario library, and get alerts before your customers notice.

FAQs

Why do my AI agent evals pass in staging but fail in production?

Staging evals use curated inputs that cover expected paths. Production traffic includes typos, topic switches, emotional escalation, non-English fragments, and context that changes week to week (new products, updated policies, seasonal promotions). Your staging evals test what you imagined. Production reveals what customers actually do.

How many scorecard criteria should a production eval have?

Start with four to six criteria covering accuracy, task resolution, policy adherence, tone, and efficiency. More than eight criteria increases evaluation cost without proportional signal. Each criterion needs specific anchors at every score level (1-5). Vague criteria like "was the response good?" produce unreliable scores.

How often should I run scenario regression tests?

Run the full suite before every deploy (in CI/CD). Run a subset of high-risk scenarios nightly against production. Weekly is the minimum cadence for catching drift. If you deploy multiple times per day, run a smoke subset of 5-10 critical scenarios per deploy and the full suite nightly.

What's a meaningful quality score drop that should trigger an alert?

A sustained drop of 0.3 points or more on a 5-point scale over a one-week rolling window signals real degradation, not noise. Single-day drops of 0.5+ warrant immediate investigation. Set warning thresholds at 1 standard deviation below baseline and critical thresholds at 2 standard deviations. Calculate these from your first two weeks of production data.

Can I run production evals without increasing my LLM costs significantly?

Yes. Sample 5-10% of production conversations for scorecard evaluation. Use a cheaper model (like GPT-4o-mini) as your judge as long as its scores stay closely correlated (above 0.9) with those of your primary judge model. Batch evaluations during off-peak hours. A typical production eval pipeline adds 3-8% to total LLM costs.
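One way to check that agreement, sketched here under the assumption that "correlation" means the Pearson coefficient over the same set of conversations scored by both judges (`pearson` and `cheapJudgeIsAcceptable` are hypothetical helper names):

```typescript
// Pearson correlation coefficient between two equally long score lists.
function pearson(xs: number[], ys: number[]): number {
  if (xs.length !== ys.length || xs.length < 2) {
    throw new Error('Need two score lists of equal length (at least 2 items)');
  }
  const n = xs.length;
  const meanX = xs.reduce((s, v) => s + v, 0) / n;
  const meanY = ys.reduce((s, v) => s + v, 0) / n;
  let covariance = 0;
  let varianceX = 0;
  let varianceY = 0;
  for (let i = 0; i < n; i++) {
    const dx = xs[i] - meanX;
    const dy = ys[i] - meanY;
    covariance += dx * dy;
    varianceX += dx * dx;
    varianceY += dy * dy;
  }
  if (varianceX === 0 || varianceY === 0) {
    throw new Error('Correlation is undefined when one judge gives constant scores');
  }
  return covariance / Math.sqrt(varianceX * varianceY);
}

// True when the cheaper judge tracks the primary judge closely enough.
function cheapJudgeIsAcceptable(
  primary: number[],
  cheap: number[],
  minCorrelation = 0.9,
): boolean {
  return pearson(primary, cheap) >= minCorrelation;
}
```

Correlation only shows that the two judges rank conversations similarly. A cheaper judge that is consistently half a point more generous would still correlate well, so it is worth comparing the mean scores too before switching.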

How do I handle non-determinism in AI agent evaluations?

Run each evaluation three times and take the median score. If scores swing more than 1.0 between runs on the same conversation, tighten your rubric anchors with more concrete examples at each level. Use temperature 0 for your judge model. Track score variance as a metric itself, since high variance indicates your rubric needs work.
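A minimal sketch of the median-of-three approach, using hypothetical helper names and the 1.0-point swing limit mentioned above:

```typescript
// Median of repeated judge scores for one conversation.
function medianScore(scores: number[]): number {
  if (scores.length === 0) throw new Error('Need at least one score');
  const sorted = scores.slice().sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 1 ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2;
}

// Flags conversations whose repeated scores swing by more than maxSwing points.
function isUnstable(scores: number[], maxSwing = 1.0): boolean {
  return Math.max(...scores) - Math.min(...scores) > maxSwing;
}

const judgeRuns = [3.0, 4.5, 3.5];
const finalScore = medianScore(judgeRuns); // 3.5
const needsRubricWork = isUnstable(judgeRuns); // true: the swing is 1.5 points
```

The median ignores a single outlier run, while the swing check surfaces conversations where the rubric itself is ambiguous enough to deserve a closer look.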

What's the difference between agent drift and model drift?

Model drift happens when the underlying model changes (provider updates, fine-tuning decay) over weeks or months. Agent drift happens within a single conversation as context windows fill and attention patterns shift. Production eval systems need to catch both: scorecards on sampled traffic detect model drift, while scenario tests at varying conversation depths detect agent drift.

Should I block deploys automatically when eval scores drop?

Yes, for scenarios that test critical paths (billing, refunds, safety). Set a hard gate: if any critical scenario scores below your threshold, the deploy fails. For non-critical scenarios, use a warning that requires manual override. Start strict and loosen as you build confidence in your thresholds. A blocked deploy is cheaper than a production incident.
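The split between hard gates and warnings can be sketched as a small decision function. The `critical` flag on each scenario is a hypothetical field for illustration; how you tag critical paths depends on your own scenario metadata.

```typescript
interface ScenarioResult {
  name: string;
  score: number;
  critical: boolean; // hypothetical flag: true for billing, refunds, safety paths
}

type GateDecision = 'pass' | 'warn' | 'block';

// Critical scenarios below threshold block the deploy; non-critical ones
// below threshold only warn, leaving the decision to a human.
function decideGate(results: ScenarioResult[], threshold = 3.5): GateDecision {
  if (results.length === 0) return 'block'; // an empty suite proves nothing
  const failing = results.filter((r) => r.score < threshold);
  if (failing.some((r) => r.critical)) return 'block';
  return failing.length > 0 ? 'warn' : 'pass';
}
```

In CI, `block` would map to a non-zero exit code, while `warn` would pass the job but post a comment that requires a manual acknowledgement.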

Co-founder

Building the platform for AI agents at Chanl — tools, testing, and observability for customer experience.
